By Oluwafemi Oluseki

Each month, engineering and finance departments across the African tech community share the same apprehension: the AWS, Google Cloud, or Azure bill is about to arrive. In the current macroeconomic environment, startups earn revenue in local currencies but pay for cloud infrastructure in US dollars.

This means that infrastructure efficiency is no longer just an engineering metric but a vital survival tool. The era of growth at any cost has given way to strict unit economics.

Yet even with all this pressure to optimize, engineering teams are bleeding money through a practice that seems unavoidable: over-provisioning. We spin up bigger servers than we need, allocate far more CPU and memory than our applications use, and maintain large, bloated Kubernetes clusters. Why? Because the alternative, an application crash during a marketing push, a failed transaction during a payday rush, or an outage during a prime-time event, is completely intolerable. Fear of downtime has us paying the hyperscalers a huge sum of money just so we can have a good night’s sleep.

It is time to put an end to this. CloudOps urgently needs resource management to move from manual and reactive to autonomous and predictive.

The Illusion of Traditional Auto-Scaling

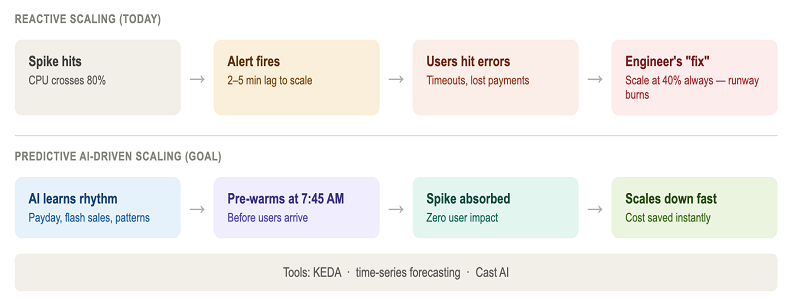

You may be asking, “Hey, we have auto-scaling! We aren’t over-provisioning.” Regrettably, traditional auto-scaling is fundamentally flawed because it is purely reactive. Whether you use Kubernetes Horizontal Pod Autoscalers (HPA) or simple AWS Auto Scaling Groups, the rule usually rests on a lagging indicator: when CPU utilization reaches 80 percent, add another instance.

The crux of the matter is this: several minutes can pass while your monitoring tool registers that 80 percent CPU spike, triggers the scaling event, launches a new virtual machine or node, downloads the heavy container image, and begins to redirect traffic to the new instances. In those critical minutes, your servers are redlining. Your database connections pile up. Above all, your users are facing extreme delays, timeouts, and request errors.

To compensate for this architectural lag, DevOps engineers simply lower the threshold or raise the baseline. They configure the system to scale at 40 or 50 percent CPU load rather than 80 percent, or they keep a generous baseline of always-on nodes as an insurance policy. The result is that you pay around the clock for a huge safety net that does useful work only 10 percent of the time, simply to catch the unforeseen 10 percent spike in traffic. This approach is static and sets your startup’s runway on fire.
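To make the cost of a lower threshold concrete, here is a minimal sketch of the ratio-based rule that the Kubernetes documentation describes for the HPA: desired replicas equal the current replicas multiplied by the current metric over the target metric, rounded up. The workload figures (10 pods averaging 40 percent CPU) are hypothetical, and the sketch ignores the HPA's tolerance band and its minimum and maximum replica limits.

```python
import math


def desired_replicas(current_replicas: int, current_cpu_pct: float, target_cpu_pct: float) -> int:
    """Ratio-based replica rule: ceil(current * currentMetric / targetMetric)."""
    return math.ceil(current_replicas * current_cpu_pct / target_cpu_pct)


# Hypothetical steady state: 10 pods, each averaging 40% CPU.
steady_at_80 = desired_replicas(10, 40.0, 80.0)  # scale-out target of 80% CPU
steady_at_40 = desired_replicas(10, 40.0, 40.0)  # scale-out target of 40% CPU

print(steady_at_80, steady_at_40)
```

Under these assumed numbers, the same traffic settles at 5 pods with an 80 percent target and 10 pods with a 40 percent target. Halving the threshold roughly doubles the always-on fleet.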

The Advancement: Autonomous, Predictive Scaling

The future of infrastructure operations is predictive, AI-driven compute. Rather than reacting to a server spike that is already underway, what if your infrastructure prepared for the spike in advance, before the first flood of users reaches your load balancer?

With machine learning (in this case, time-series forecasting models), we can use past patterns, trends, micro-bursts, and even external data to forecast future system load accurately enough to provision ahead of demand.

A truly dynamic, AI-driven platform does three critical things:

- Discovers the Rhythm of Your Business: It ingests months of telemetry data and can reveal, for example, that a Nigerian fintech app sees an unusual influx at 8:00 AM on the 25th of the month (payday), or that an e-commerce platform sees a huge surge on Friday nights, just as the weekend flash sales start.

- Warms the Infrastructure: The AI scales up your Kubernetes clusters and warms up the required pods ahead of the predicted peak, say at 7:45 AM on payday. Compute capacity is ready when users arrive, rather than users waiting for capacity to become ready.

- Scales Down Aggressively: Equally important, the AI can tell the moment the spike is subsiding. It scales resources down proactively rather than waiting out a cooldown period, cutting costs as soon as capacity is no longer in demand.
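The three behaviours above can be sketched with a deliberately simple stand-in for a real forecasting model: a seasonal average per (day of month, hour) slot. Everything here is an assumption for illustration, including the function names, the telemetry values, the per-pod throughput of 100 requests per second, and the 25 percent headroom. A production system would use a proper time-series model and call a real cluster API to apply the result.

```python
import math
from collections import defaultdict
from statistics import mean


def seasonal_profile(history):
    """Average requests/sec per (day_of_month, hour) slot.

    history: iterable of (day_of_month, hour, requests_per_sec) tuples.
    """
    buckets = defaultdict(list)
    for day, hour, rps in history:
        buckets[(day, hour)].append(rps)
    return {slot: mean(values) for slot, values in buckets.items()}


def replicas_for(profile, day, hour, rps_per_pod, headroom=1.25, min_replicas=2):
    """Pods to have warm before the slot begins, with a safety margin."""
    predicted = profile.get((day, hour), 0.0)
    return max(min_replicas, math.ceil(predicted * headroom / rps_per_pod))


# Hypothetical telemetry: three past paydays at 8 AM, and the same days at 3 AM.
history = [
    (25, 8, 900), (25, 8, 1000), (25, 8, 1100),
    (25, 3, 40), (25, 3, 50), (25, 3, 60),
]
profile = seasonal_profile(history)

payday_peak = replicas_for(profile, 25, 8, rps_per_pod=100)  # apply at ~7:45 AM
payday_night = replicas_for(profile, 25, 3, rps_per_pod=100)  # falls to the floor

print(payday_peak, payday_night)
```

With this made-up data the sketch asks for 13 pods ahead of the 8:00 AM payday rush and drops to the 2-pod floor overnight. That is the pre-warm and aggressive scale-down pattern in miniature.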

Building the Elastic Future Today

The shift to AI-powered compute does not mean tearing down your current microservices architecture. The transformation comes from adding predictive intelligence to your existing capacity-planning mechanisms. The DevOps ecosystem already supports this.

We are seeing the emergence of intelligent controllers that integrate directly with Kubernetes to replace standard reactive metrics with predictive algorithms. With event-driven tools such as KEDA (Kubernetes Event-driven Autoscaling), AI operations platforms, and custom metrics, engineering teams can build scaling triggers on predicted queue length, predicted number of concurrent users, or historical request rates, instead of raw, lagging CPU usage.
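As a rough illustration of a trigger built on predicted queue length, the sketch below extrapolates a queue's recent growth a few minutes ahead and sizes the deployment from that forecast instead of from the current reading. The straight-line extrapolation, the sample values, and the target of 50 messages per replica are all assumptions. Wiring the forecast into KEDA or another controller, for example by exposing it as an external metric, is outside the scope of this sketch.

```python
import math


def forecast_queue_length(samples, steps_ahead):
    """Naive linear extrapolation from evenly spaced queue-length samples."""
    slope = (samples[-1] - samples[0]) / (len(samples) - 1)
    return max(0.0, samples[-1] + slope * steps_ahead)


def replicas_for_queue(queue_length, target_per_replica, min_replicas=1):
    """Replicas needed so each one handles about target_per_replica messages."""
    return max(min_replicas, math.ceil(queue_length / target_per_replica))


# Hypothetical queue depth, sampled once per minute while traffic ramps up.
samples = [100, 160, 220, 280]

reactive = replicas_for_queue(samples[-1], target_per_replica=50)
predictive = replicas_for_queue(forecast_queue_length(samples, steps_ahead=3), 50)

print(reactive, predictive)
```

On these numbers the reactive calculation asks for 6 replicas, while the three-minute forecast asks for 10. The difference is capacity that would otherwise still be starting up when the messages arrive.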

Reclaiming Engineering Cycles (and Runway)

The advantages of moving to autonomous, AI-driven compute extend well beyond the finance department’s spreadsheets.

Eliminating manual resource limits and fixed cluster management lifts a colossal cognitive burden off DevOps and Site Reliability Engineering (SRE) teams. Rather than playing an endless game of Tetris with server capacity, sitting through endless capacity-planning meetings, and responding frantically to PagerDuty alerts at 2:00 AM, your engineers can work on what really matters: building resilient architecture, strengthening system security, and increasing developer velocity.

For African startups, the mandate is straightforward. We can no longer afford to build infrastructure that is bloated by design in a market characterized by currency volatility and intense competition. The future of cloud computing is not only scalable; it is also intelligent, predictive, and mercilessly efficient.

Leave the scaling to the algorithms, stop paying the fear tax, and get teams back to building the product.

ABOUT THE AUTHOR

Oluwafemi Oluseki is a Cloud Solutions Architect and Platform Engineer working across AWS and Azure, specialising in platform engineering, security, and cloud infrastructure. His work focuses on building secure, scalable platforms that improve deployment consistency and operational reliability.

He is co-founder of LimeSoft Systems, where he develops cloud-native platforms supporting modern application delivery across multiple teams and organisations. He writes and mentors within the African tech community.